Forecasts suggest that Nvidia’s next-generation superchip, Vera Rubin, will use sixth-generation High Bandwidth Memory (HBM4) from only SK hynix and Samsung Electronics. With Micron seemingly out of the HBM4 supply chain, the two South Korean semiconductor giants are poised to split the HBM supply for Vera Rubin between them.

On February 7, semiconductor analysis firm SemiAnalysis reported, “There is no evidence of Nvidia placing HBM orders with Micron,” and projected that Micron’s HBM share in Vera Rubin would fall to 0%. The firm forecast that SK hynix would capture roughly 70% of the HBM4 supply, with Samsung Electronics securing the remaining 30%.

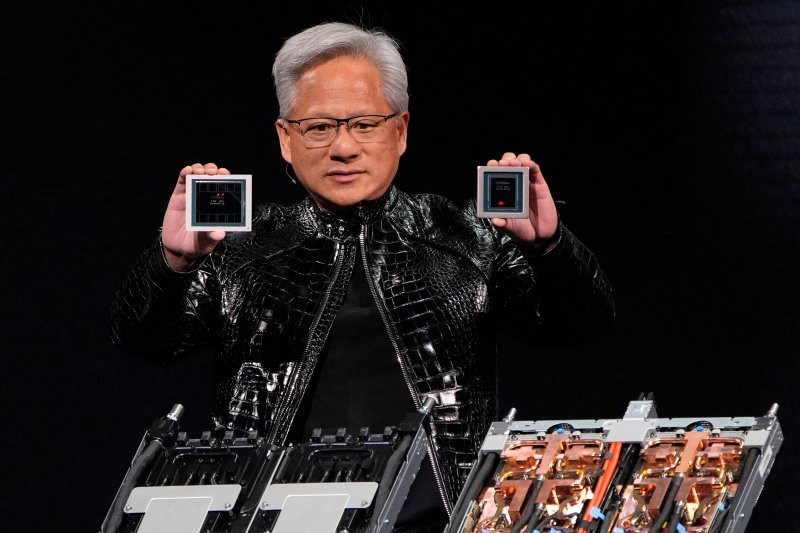

The Vera Rubin system integrates 36 Vera CPUs and 72 Rubin GPUs into a single system. Compared with current Blackwell-based products, it delivers a fivefold increase in inference performance, cuts per-token costs by 90%, and requires only a quarter of the GPUs for model training. The system is expected to be released late this summer as the VR200 NVL72 rack-scale solution.

Engineered for large-scale AI model operations, Vera Rubin hinges on HBM4-based ultra-high-bandwidth memory. Some reports suggest that Micron, unlike its Korean counterparts, failed to secure HBM4 orders for Vera Rubin.

However, industry watchers suggest Micron might offset this setback by supplying substantial volumes of LPDDR5X memory for the Vera CPUs within the Vera Rubin ecosystem.

Insiders point to Nvidia’s aggressive memory specification upgrades as a key factor in supplier selection. Nvidia initially targeted a memory bandwidth of 13TB/s for the VR200 NVL72 system in March 2025, then raised the target sharply to 20.5TB/s by September. At CES 2026, the company revealed the system operating at 22TB/s, nearly 70% above the original goal.
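
For readers who want to check the arithmetic behind the "near 70%" figure, the short sketch below computes the relative increase of each bandwidth figure over the March 2025 target. The function name `percent_increase` and the dictionary labels are illustrative choices, not anything published by Nvidia or SemiAnalysis; the only inputs are the three TB/s values quoted above.

```python
def percent_increase(old: float, new: float) -> float:
    """Return the relative increase from old to new, in percent."""
    return (new - old) / old * 100.0


# Bandwidth figures quoted in the article, in TB/s.
# The labels are illustrative; only the numbers come from the text.
targets = {"March 2025": 13.0, "September": 20.5, "CES 2026": 22.0}

baseline = targets["March 2025"]

# Increase of each figure relative to the original March 2025 target,
# rounded to one decimal place.
increases = {
    label: round(percent_increase(baseline, value), 1)
    for label, value in targets.items()
}

for label, pct in increases.items():
    print(f"{label}: {targets[label]} TB/s (+{pct}% vs. original target)")
```

By this calculation, the September revision sits about 57.7% above the original target and the CES 2026 figure about 69.2% above it, consistent with the "near 70%" description.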

This specification escalation severely tests memory manufacturers’ technological prowess and yield management. Currently, only Samsung and SK hynix are deemed capable of meeting the stringent HBM4 requirements.